Timeline

July 1, 2022, midnight UTC

Oct. 31, 2022, midnight UTC

Data

The data for this competition is released under the CC BY-NC-SA 4.0 license. You may download the full set of data as a compressed zip file (~105MB) from Zenodo.

Contents

The dataset consists of 26 unprocessed raw images taken by the on-board camera of OPS-SAT. Depending on the cropping, these images are about 2048x1944 pixels in size and are saved in .png format. The goal of this competition is to train an EfficientNet-Lite0 model to classify 200x200 patches cropped from the larger images, which we refer to as tiles.

The .zip file containing the dataset is organized as follows:

- Agricultural: 10 tiles for the Agricultural class.
- Cloud: 10 tiles for the Cloud class.
- Mountain: 10 tiles for the Mountain class.
- Natural: 10 tiles for the Natural class.
- River: 10 tiles for the River class.
- Sea_ice: 10 tiles for the Sea Ice class.
- Snow: 10 tiles for the Snow class.
- Water: 10 tiles for the Water class.
- images: 26 unprocessed raw OPS-SAT images.
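As an illustration of the folder layout above, the following minimal sketch collects the labeled tiles per class after the archive has been unpacked. The helper names `index_tiles` and `labeled_samples` are hypothetical, and the sketch assumes that the tiles are stored as .png files directly inside each class folder. The integer labels simply follow the order of the list above, which may differ from the mapping used by the official utility scripts.

```python
from pathlib import Path

# Class folder names as listed in the dataset description.
CLASS_NAMES = [
    "Agricultural", "Cloud", "Mountain", "Natural",
    "River", "Sea_ice", "Snow", "Water",
]


def index_tiles(root):
    """Map each class name to a sorted list of .png tile paths in its folder.

    Assumption: tiles are .png files stored directly in the class folder.
    """
    root = Path(root)
    index = {}
    for name in CLASS_NAMES:
        folder = root / name
        # A missing folder yields an empty list instead of raising an error.
        index[name] = sorted(folder.glob("*.png")) if folder.is_dir() else []
    return index


def labeled_samples(index):
    """Flatten the index into (path, integer_label) pairs.

    Assumption: labels follow the order of CLASS_NAMES; the official
    scripts may define a different mapping.
    """
    return [
        (path, label)
        for label, name in enumerate(CLASS_NAMES)
        for path in index[name]
    ]
```

Calling `labeled_samples(index_tiles("path/to/unpacked/dataset"))` should return 80 pairs if every class folder holds its 10 tiles.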

Note that the targets of classification are the tiles, not the images themselves. Depending on the cropping, about 100 such tiles can be extracted from an image, so one image can contain tiles of multiple classes. However, each tile is associated with exactly one of the 8 classes. The public data is provided as is and can be used by the participants however they like.
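To make the tile count concrete, here is a small sketch that enumerates crop boxes for one image. It assumes a non-overlapping grid of 200x200 tiles anchored at the top-left corner, with incomplete tiles at the right and bottom edges discarded. The cropping actually used to produce the labeled tiles is not specified here, so `tile_boxes` is a hypothetical helper rather than the organizers' procedure.

```python
def tile_boxes(width, height, tile=200):
    """Return (left, top, right, bottom) boxes of a non-overlapping tile grid.

    Assumption: the grid starts at the top-left corner and partial tiles
    at the right and bottom edges are dropped.
    """
    boxes = []
    for top in range(0, height - tile + 1, tile):
        for left in range(0, width - tile + 1, tile):
            boxes.append((left, top, left + tile, top + tile))
    return boxes


boxes = tile_boxes(2048, 1944)
print(len(boxes))  # 10 columns x 9 rows = 90 tiles
```

Under these assumptions a 2048x1944 image yields 90 tiles, in line with the "about 100" figure; overlapping or offset crops would give a different count. The boxes use the (left, upper, right, lower) convention accepted by Pillow's `Image.crop`.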

A hidden evaluation dataset containing several labeled tiles is stored on our servers, inaccessible to the public, and is used to score your submissions. This hidden evaluation set was generated by a split of the OPS-SAT images. Consequently, no tile of the evaluation set (labeled or unlabeled) is directly available to you. In other words, none of the tiles or images of the public dataset are part of the evaluation, and predicting their class correctly (or incorrectly) does not change the score your submission will achieve.

This is quite different from some of our previous Kelvins challenges, where the score was typically computed from submitted inferences on the unlabeled part of the dataset. For this competition, you are asked to submit the model parameters of an EfficientNet-Lite0 instead of such inferences. The Kelvins server will then "simulate" the OPS-SAT satellite by instantiating this neural network architecture, loading your submitted parameters and running inference "onboard" for several tiles of the hidden evaluation dataset.

You can find more details about this process under the submission rules for this challenge.

Preview

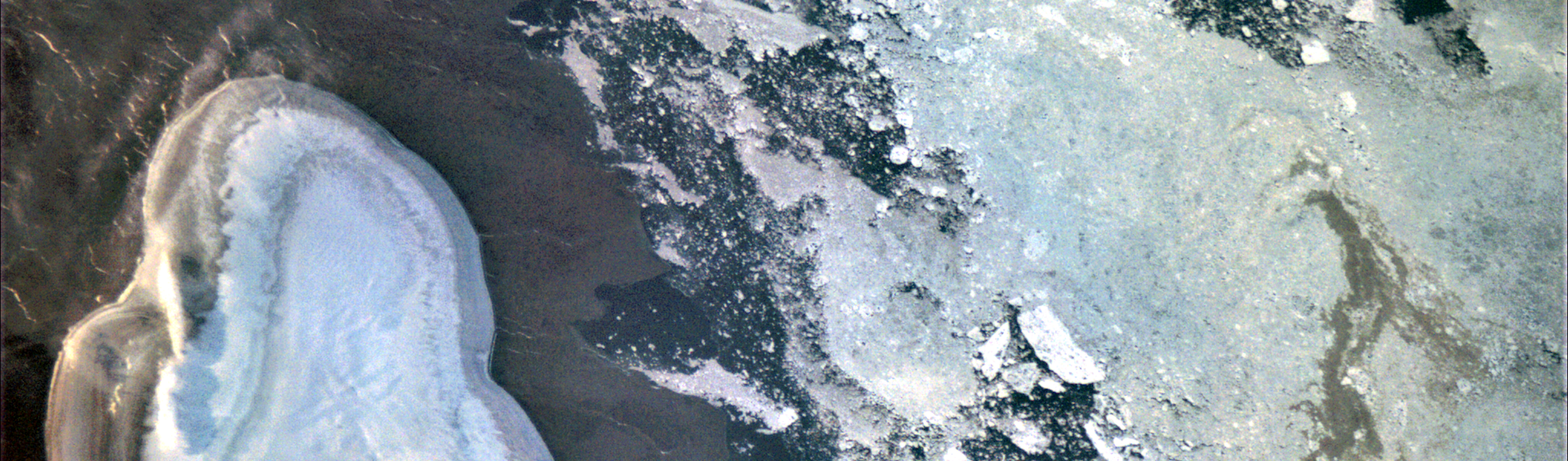

Here are some examples of labeled tiles that you will find in the dataset, from left to right: Mountain, Agricultural, Sea Ice, River.

Getting started

We provide all teams with utility scripts that will help you work with the dataset and generate submissions. You are invited to clone our GitLab repository for this purpose. Our codebase also includes a Jupyter notebook that closely resembles the evaluation procedure that we apply to your submission on our server.